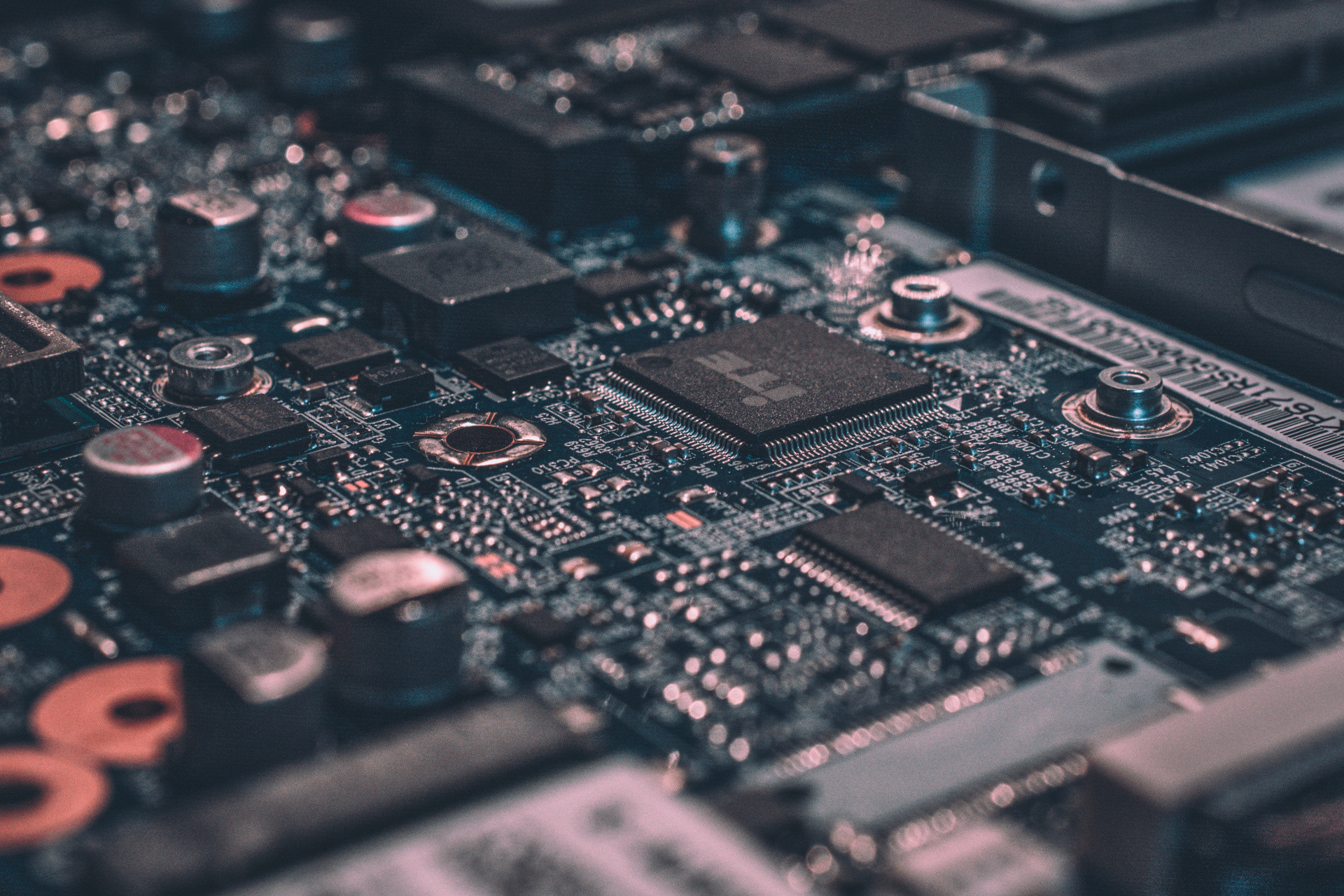

Smart. Fast. Secure.

Gyver has been providing smart technologies, fast connections, and secure network designs for over 15 years, and we aren’t slowing down.

Let our team combine best-in-class technologies to level up your organization’s speed, connectivity, and security.